TL;DR

-

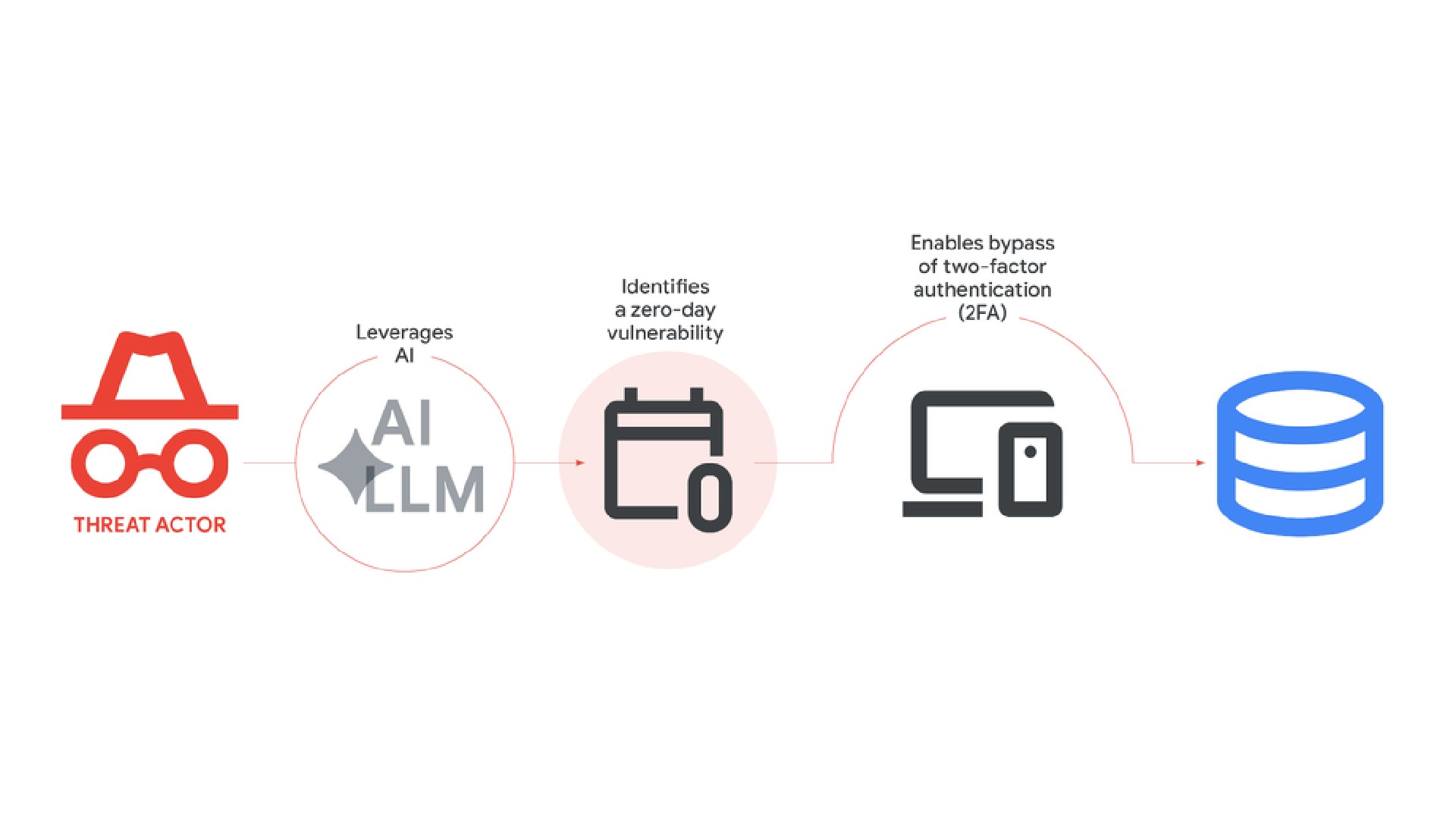

Google says cybercriminals are now using AI for much more than phishing emails, including discovering software vulnerabilities, creating malware, and automating cyberattacks.

-

One of the biggest concerns is the possibility of the first case of an AI-developed zero-day exploit, in which AI may have helped find or build an attack exploiting a hidden software flaw before developers could fix it.

-

AI-powered malware like PROMPTSPY can reportedly adapt to infected systems with minimal human control.

Artificial intelligence has spent the last couple of years making everyday life easier. It writes emails, summarizes meetings, edits photos, helps with homework, and somehow always knows what recipe you can make with leftover onions and a tomato in the fridge. But while most of us use AI to save time, cybercriminals are increasingly using it to make hacking faster, smarter, and far more scalable.

That’s the biggest takeaway from new findings shared by Google’s Threat Intelligence Group (GTIG). The company says AI is no longer just a side tool for attackers; it’s actually becoming part of the core system powering modern cybercrime. And that changes the conversation around AI quite a bit.

Until recently, many hackers were mostly experimenting with AI for tasks such as writing phishing emails or generating fake messages. Now, attackers are reportedly using AI to discover software weaknesses, write malware, bypass security protections, and even automate parts of cyberattacks with very little human effort.

AI is moving from assistant to active participant in cybercrime

One of the most worrying examples involves what could be the first known AI-developed zero-day exploit. A “zero-day” vulnerability is basically a hidden software flaw that developers don’t know about yet, meaning there’s no fix available when attackers discover it.

According to the findings, AI may have helped identify or build an attack around one of these unknown flaws before it could be patched. That’s a pretty major shift because finding these vulnerabilities has traditionally required highly skilled researchers and a lot of time. AI could dramatically speed up that process.

The report also highlights growing interest from state-linked hacking groups connected to China and North Korea in using AI for exploit research and vulnerability discovery. This means governments and organized cyber groups are increasingly exploring how AI can help them break into systems faster and more efficiently.

Autonomous malware is another unsettling development. Normally, hackers manually control different stages of an attack after infecting a system. But malware like PROMPTSPY can reportedly make some of those decisions on its own. It can analyze the infected device, adapt to different situations, generate commands dynamically, and react without waiting for constant human instructions. That means attackers may eventually be able to launch malware that behaves more like an independent AI agent than a conventional malicious program.

The misuse of AI isn’t limited to hacking systems either. It’s increasingly used for online misinformation campaigns. Deepfakes, fake videos, AI-generated posts, and synthetic media are helping bad actors create what’s described as a “fabricated digital consensus” — basically making false information appear far more believable and widely supported than it actually is. One example mentioned is Operation Overload, a pro-Russia influence campaign.

Of course, AI is also helping defenders fight back. Google highlighted tools such as Big Sleep and CodeMender, designed to identify and fix vulnerabilities before attackers can exploit them. The company also says it actively removes malicious Gemini accounts involved in abuse campaigns.

Still, the larger takeaway here is hard to ignore. AI is no longer just helping cybercriminals work faster or automate repetitive tasks. It’s increasingly part of the infrastructure powering modern cyberattacks, and that shift is happening much faster than most people probably expected.
